Why Your Data Collection Method Determines Research Success

Every groundbreaking research project starts with a critical decision: How will you collect your data? This choice shapes everything that follows—your findings’ validity, your research timeline, your budget requirements, and ultimately, whether you can answer your research questions effectively.

According to recent statistics, the world generated roughly 120 zettabytes of data in 2023, with projections of about 181 zettabytes for 2025. However, quantity means nothing without quality. The wrong data collection approach generates incomplete, biased, or unreliable information that undermines even the most carefully designed studies.

Furthermore, inappropriate data collection techniques directly affect research outcomes. Studies using mismatched methods risk rejection during peer review, waste resources, and may reach invalid conclusions. Conversely, well-chosen methods produce actionable insights that advance knowledge and inform decisions.

In this comprehensive guide, we’ll explore how to select the optimal data collection method for your specific research context. Additionally, you’ll discover practical frameworks for comparing different approaches, avoiding common pitfalls, and ensuring your data meets the highest quality standards.

Understanding the Data Collection Landscape

Before selecting a specific method, researchers must understand the fundamental categories that organize data collection approaches. This foundation enables informed decision-making throughout your research design process.

Primary vs. Secondary Data: The First Major Decision

Your initial choice involves determining whether to collect original data or use existing information. This decision significantly impacts your research timeline, budget, and the specificity of insights you can obtain.

Primary Data Collection

Primary data refers to information you gather directly from original sources specifically for your research questions. This approach provides current, relevant, and tailored information that addresses your exact needs.

Advantages of primary data include:

→ Complete control over data quality and collection procedures

→ Specific targeting of your precise research questions

→ Current information reflecting present conditions

→ Proprietary insights unavailable elsewhere

→ Exact specifications matching your analytical requirements

However, primary data collection demands more resources. You’ll invest significant time designing instruments, recruiting participants, managing data collection, and ensuring quality control. Moreover, costs typically exceed those of secondary data approaches, particularly for large-scale studies.

Secondary Data Collection

In contrast, secondary data involves using information previously collected by others—government agencies, research institutions, companies, or databases. According to research methodologists, secondary data provides efficient access to established datasets.

Benefits of secondary data include:

→ Cost effectiveness compared to original data collection

→ Time savings from immediate data availability

→ Large sample sizes often exceeding primary collection feasibility

→ Historical trends enabling longitudinal analysis

→ Benchmarking opportunities for comparative studies

Nevertheless, secondary data comes with limitations. The information may not perfectly match your research needs, definitions might differ from your requirements, and you have no control over collection quality. Additionally, data currency can be problematic if significant time has elapsed since collection.

Qualitative vs. Quantitative: Depth or Breadth?

The second fundamental distinction involves choosing between qualitative and quantitative approaches. This decision reflects the nature of your research questions and the type of understanding you seek.

Qualitative Data Collection

Qualitative methods focus on understanding the “why” behind behaviors, attitudes, and experiences. These approaches generate rich, narrative data revealing complex patterns and meanings.

Common qualitative methods include:

- In-depth interviews exploring individual perspectives

- Focus groups capturing group dynamics and interactions

- Observations documenting behaviors in natural settings

- Document analysis examining existing texts and materials

- Case studies providing detailed examinations of specific instances

According to PMC research, qualitative data allows researchers to explore complex issues in depth, providing comprehensive views of subjects under study. However, findings may be more subjective and less easily generalized to larger populations.

Quantitative Data Collection

Alternatively, quantitative methods gather numerical data suitable for statistical analysis. These approaches measure specific variables, identify patterns, and test hypotheses with mathematical rigor.

Typical quantitative methods include:

- Surveys with closed-ended questions yielding numeric responses

- Experiments manipulating variables to measure effects

- Structured observations counting specific behaviors

- Secondary data analysis of existing numeric datasets

- Content analysis quantifying communication patterns

Research shows that quantitative data supports statistical analysis with clear, measurable results. Furthermore, studies indicate that numerical data makes comparison and generalization across populations and time periods easier, provided the samples are representative.

Mixed Methods: Combining Strengths

Increasingly, researchers adopt mixed-methods approaches combining qualitative and quantitative techniques. This integration leverages each approach’s strengths while compensating for individual limitations.

For example, you might:

- Conduct exploratory interviews (qualitative) to inform survey development (quantitative)

- Use surveys (quantitative) to identify patterns, then interviews (qualitative) to understand causes

- Implement experiments (quantitative) with participant observations (qualitative) capturing context

- Analyze documents (qualitative) alongside statistical data (quantitative) for comprehensive understanding

Mixed-methods research provides holistic views of research problems. However, it requires expertise in multiple approaches, increases time demands, and complicates data integration and analysis.

The 8 Most Common Data Collection Methods Explained

Understanding specific data collection methods helps you identify which approaches suit your research context. Let’s explore eight widely used techniques, examining their characteristics, applications, and practical considerations.

Method #1: Surveys and Questionnaires

Surveys systematically gather information from individuals through standardized questions. This method represents one of the most versatile and widely used data collection approaches.

How surveys work:

Researchers design structured questionnaires with predetermined questions, then distribute them to target populations. Questions can be closed-ended (multiple choice, rating scales) for quantitative analysis or open-ended for qualitative insights.
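Closed-ended items are straightforward to tabulate. As a minimal sketch (the responses below are invented for illustration, and the 1 to 5 agreement scale is an assumption), a single rating item can be summarized with frequency counts, the median, and the mean using only Python's standard library:

```python
from collections import Counter
from statistics import mean, median

# Hypothetical answers to one closed-ended item on a 1-5 agreement scale
# (1 = strongly disagree, 5 = strongly agree); None marks a skipped question.
responses = [5, 4, 4, 3, None, 2, 4, 5, 1, 4]

def summarize_likert(values, scale=range(1, 6)):
    """Return counts per scale point plus the median and mean of answered items."""
    answered = [v for v in values if v is not None]
    counts = Counter(answered)
    return {
        "n_answered": len(answered),
        "n_skipped": len(values) - len(answered),
        "counts": {point: counts.get(point, 0) for point in scale},  # include empty scale points
        "median": median(answered),
        "mean": round(mean(answered), 2),
    }

summary = summarize_likert(responses)
print(summary)
```

Many analysts report the median or the full distribution for rating scales, because the distances between scale points are not guaranteed to be equal.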

Best applications:

- Measuring attitudes, opinions, or preferences across large populations

- Collecting demographic information efficiently

- Tracking changes over time through repeated surveys

- Gathering feedback on products, services, or experiences

Advantages:

→ Cost-effective for reaching large samples

→ Standardized responses enabling statistical analysis

→ Anonymous participation increasing honest responses

→ Scalable from small groups to thousands of participants

Limitations:

- Response rates often fall below 30%, potentially creating bias

- Question design significantly affects data quality

- Limited depth compared to qualitative methods

- Cannot clarify misunderstandings or explore unexpected topics

Pro tip: According to data collection experts, pilot testing surveys with small groups identifies confusing questions and technical issues before full deployment.

Method #2: In-Depth Interviews

Interviews involve direct one-on-one conversations between researchers and participants, enabling deep exploration of individual experiences, perspectives, and motivations.

Interview variations:

Structured interviews follow predetermined questions exactly, ensuring consistency across participants. Semi-structured interviews combine scripted questions with flexibility to explore emerging topics. Unstructured interviews flow conversationally, allowing participants to guide discussions naturally.

Ideal situations:

- Exploring sensitive topics requiring trust and rapport

- Understanding complex decision-making processes

- Gathering detailed life histories or experiences

- Investigating topics where little is known initially

Strengths:

→ Rich, detailed data capturing nuance and context

→ Flexible adaptation to individual participants

→ Clarification opportunities for unclear responses

→ Non-verbal cues providing additional insights

Challenges:

- Time-intensive for both collection and analysis

- Interviewer skills significantly impact data quality

- Difficult to generalize findings from small samples

- Potential interviewer bias influencing responses

Research from InnovateMR emphasizes that interviews excel when recruiting hard-to-reach audiences or capturing emotions and personal stories.

Method #3: Focus Groups

Focus groups bring together small groups (typically 6-12 participants) for moderated discussions about specific topics. This method leverages group dynamics to generate insights unavailable through individual approaches.

How focus groups function:

Trained moderators facilitate structured conversations, encouraging participants to share ideas, challenge perspectives, and build upon each other’s comments. Interactions reveal consensus, disagreements, and social influences on opinions.

Optimal uses:

- Testing reactions to products, services, or concepts

- Exploring cultural norms and collective attitudes

- Generating ideas for new initiatives or improvements

- Understanding how group dynamics influence individual opinions

Benefits:

→ Efficient gathering of diverse perspectives simultaneously

→ Interactive discussions sparking new insights

→ Observing real-time group dynamics and social influences

→ Cost-effective compared to equivalent individual interviews

Drawbacks:

- Dominant personalities may influence quieter participants

- Groupthink can suppress dissenting opinions

- Confidential or sensitive topics are poorly suited to group discussion

- Coordinating several participants’ schedules complicates logistics

Furthermore, qualitative research specialists note that focus groups work best when exploring topics where group perspectives matter more than individual experiences.

Method #4: Observation

Observational methods involve systematically watching and recording behaviors, interactions, or phenomena as they naturally occur. Unlike self-reported data, observations capture what people actually do rather than what they claim they do.

Observation types:

Participant observation requires researchers to actively engage with groups while observing. Non-participant observation involves watching without direct involvement. Structured observation uses predetermined categories and checklists, while unstructured observation takes more exploratory approaches.

Ideal applications:

- Studying behaviors difficult to self-report accurately

- Understanding workplace or social interactions

- Investigating cultural practices and rituals

- Capturing non-verbal communication and body language

Advantages:

→ Authentic data from natural settings

→ Non-verbal information complementing verbal reports

→ Contextual understanding of environmental influences

→ Behavior verification beyond self-reports

Limitations:

- Observer presence may alter natural behaviors

- Interpretation requires researcher judgment (potential bias)

- Time-consuming to observe sufficient instances

- Ethical considerations regarding consent and privacy

According to data collection research, observation reveals situational dynamics that generally cannot be measured through other techniques.

Method #5: Experiments and A/B Testing

Experimental methods manipulate one or more variables while controlling others to establish cause-and-effect relationships. Randomized experiments are widely regarded as the gold standard for establishing causation rather than mere correlation.

How experiments work:

Researchers systematically vary independent variables (treatments) and measure effects on dependent variables (outcomes). Random assignment to conditions and careful control minimize confounding factors.
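Random assignment is the step that lets an experiment rule out confounders. A minimal sketch, assuming a simple two-arm design and hypothetical participant IDs: shuffle the list with a seeded generator (so the allocation can be reproduced and audited), then deal participants into arms in turn so group sizes differ by at most one.

```python
import random

def randomly_assign(participant_ids, arms=("control", "treatment"), seed=None):
    """Shuffle participants, then deal them into arms in turn for near-equal group sizes."""
    rng = random.Random(seed)  # seeded generator makes the allocation reproducible
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    assignment = {}
    for position, pid in enumerate(shuffled):
        assignment[pid] = arms[position % len(arms)]  # alternate arms after shuffling
    return assignment

# Hypothetical pool of 20 enrolled participants
participants = [f"P{i:03d}" for i in range(1, 21)]
groups = randomly_assign(participants, seed=2024)
print(sum(1 for arm in groups.values() if arm == "treatment"))  # 10
```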

Best suited for:

- Testing intervention effectiveness

- Comparing multiple approaches or strategies

- Isolating specific factors’ impacts

- Validating theories with controlled conditions

Strengths:

→ Causal conclusions through controlled manipulation

→ Internal validity from confound control

→ Replicable procedures enabling verification

→ Precise measurement of effect sizes

Weaknesses:

- Artificial conditions may limit real-world applicability

- Ethical constraints prevent manipulating certain variables

- Expensive and time-intensive implementation

- Participant reactivity to experimental situations

Research from ProfileSpider recommends using sample size calculators to ensure statistical power and running tests long enough for reliable results.
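Many A/B-test sample size calculators rest on a normal-approximation formula. As a sketch (not any particular vendor's tool; the baseline and target conversion rates are hypothetical), the per-group size needed to detect a change from rate p1 to rate p2 is n = (z_(1-α/2) + z_(1-β))² × [p1(1-p1) + p2(1-p2)] / (p1-p2)²:

```python
from math import ceil
from statistics import NormalDist

def n_per_group_two_proportions(p1, p2, alpha=0.05, power=0.80):
    """Per-group n for a two-sided test of p1 vs p2 (normal approximation, unpooled variance)."""
    z = NormalDist()                    # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value for a two-sided test
    z_beta = z.inv_cdf(power)           # quantile matching the desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p1 - p2) ** 2)

# Hypothetical: baseline conversion of 10%, hoping to detect a lift to 12%
n = n_per_group_two_proportions(0.10, 0.12)
print(n)  # roughly 3,840 users per arm
```

Small expected differences drive the required sample up quickly, which is why tests must run long enough to accumulate it. Other tools may use pooled variance or continuity corrections and return somewhat different figures.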

Method #6: Document and Content Analysis

Document analysis systematically examines existing texts, records, or materials to extract relevant information. This method accesses rich historical and contemporary data without primary collection costs.

Document sources:

Official reports, policy documents, organizational records, social media posts, emails, historical archives, newspaper articles, websites, and multimedia content all provide analyzable material.
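When document analysis is quantified (content analysis), a simple starting point is a keyword dictionary. A minimal sketch, assuming a hypothetical dictionary of coding categories and invented patient comments: count how many documents mention at least one term from each category. Real projects usually refine the dictionary iteratively and check automated codes against human coders.

```python
import re
from collections import Counter

# Hypothetical coding dictionary: each category maps to indicator terms.
KEYWORD_DICTIONARY = {
    "cost": {"price", "cost", "expensive", "afford"},
    "access": {"wait", "appointment", "distance", "access"},
}

def code_documents(documents, dictionary):
    """Count how many documents mention at least one term from each category."""
    doc_counts = Counter()
    for text in documents:
        words = set(re.findall(r"[a-z']+", text.lower()))  # crude word tokenization
        for category, terms in dictionary.items():
            if words & terms:  # any overlap means the document touches this category
                doc_counts[category] += 1
    return doc_counts

docs = [
    "The appointment wait was far too long.",
    "Care is too expensive and I cannot afford it.",
    "Great staff, no complaints.",
    "The cost was fine but the distance to the clinic is a problem.",
]
result = code_documents(docs, KEYWORD_DICTIONARY)
print(result)
```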

Appropriate uses:

- Tracking changes over time in policies or attitudes

- Analyzing public discourse or media representations

- Investigating historical events or organizational histories

- Studying communication patterns or messaging strategies

Benefits:

→ Cost-effective using existing materials

→ Non-intrusive avoiding participant burden

→ Historical access to past events and contexts

→ Large-scale analysis of extensive materials

Constraints:

- Document quality and completeness vary

- Missing context limits interpretation

- Authenticity and bias require assessment

- Cannot ask clarifying questions of sources

Additionally, research methodologists emphasize that document analysis requires careful consideration of context and source credibility.

Method #7: Sampling Surveys

Sampling surveys select representative subsets from larger populations, enabling researchers to draw conclusions about entire groups without surveying everyone. This efficiency makes studying large populations practical and cost-effective.

Sampling approaches:

Simple random sampling gives each population member an equal probability of selection. Stratified sampling divides the population into subgroups (strata) and samples within each, ensuring every subgroup is represented. Cluster sampling randomly selects entire groups, such as schools or neighborhoods, rather than individuals. Convenience sampling, a non-probability approach, uses easily accessible participants.
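The three probability designs can be sketched in a few lines of Python. This is a minimal illustration with invented student and school data; it assumes proportional allocation for the stratified design, and real surveys would also record selection probabilities for weighting. Convenience sampling is omitted because it involves no random selection.

```python
import random
from collections import defaultdict

def simple_random_sample(population, n, rng):
    """Every member has the same chance of selection."""
    return rng.sample(population, n)

def stratified_sample(population, stratum_of, fraction, rng):
    """Sample the same fraction within each stratum (proportional allocation)."""
    strata = defaultdict(list)
    for member in population:
        strata[stratum_of(member)].append(member)
    sample = []
    for members in strata.values():
        k = max(1, round(len(members) * fraction))  # at least one member per stratum
        sample.extend(rng.sample(members, k))
    return sample

def cluster_sample(clusters, n_clusters, rng):
    """Pick whole clusters at random, then include every member of each chosen cluster."""
    chosen = rng.sample(sorted(clusters), n_clusters)
    return [member for name in chosen for member in clusters[name]]

rng = random.Random(42)
# Hypothetical population: 80 undergraduates and 20 postgraduates
students = [(f"S{i:03d}", "undergrad" if i < 80 else "postgrad") for i in range(100)]
srs = simple_random_sample(students, 10, rng)
strat = stratified_sample(students, lambda s: s[1], 0.10, rng)
# Hypothetical clusters: six schools with 25 pupils each
schools = {f"School {c}": [f"{c}-{j}" for j in range(25)] for c in "ABCDEF"}
clus = cluster_sample(schools, 2, rng)
print(len(srs), len(strat), len(clus))  # 10 10 50
```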

Ideal scenarios:

- Studying large populations where complete enumeration is impractical

- Conducting national polls or public health surveys

- Estimating population parameters with precision

- Making generalizable inferences beyond the sample

Advantages:

→ Efficiency reducing costs and time dramatically

→ Generalizability when properly executed

→ Statistical inference about populations

→ Practicality for massive populations

Challenges:

- Sampling errors introduce uncertainty

- Non-response bias if certain groups don’t participate

- Complex calculations for sample size determination

- Representativeness depends on sampling technique quality

According to Georgetown Law Library research guides, random probability sampling provides the least biased selection method for large samples.
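Sample size determination for a simple survey estimate mostly comes down to one formula. To estimate a population proportion within a margin of error e, a common approach is n0 = z²p(1-p)/e², optionally reduced with the finite population correction n = n0 / (1 + (n0-1)/N). A minimal sketch, assuming simple random sampling (stratified or cluster designs need design-effect adjustments) and using p = 0.5 as the conservative default:

```python
from math import ceil
from statistics import NormalDist

def sample_size_for_proportion(margin, confidence=0.95, p=0.5, population=None):
    """Completed responses needed to estimate a proportion within +/- margin.

    p=0.5 is the conservative choice because it maximizes p*(1-p).
    If a population size is given, apply the finite population correction.
    """
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # e.g. about 1.96 for 95%
    n0 = z ** 2 * p * (1 - p) / margin ** 2
    if population is not None:
        n0 = n0 / (1 + (n0 - 1) / population)  # finite population correction
    return ceil(n0)

print(sample_size_for_proportion(0.05))                   # 385
print(sample_size_for_proportion(0.05, population=2000))  # 323
```

These figures are completed responses; dividing by the expected response rate gives the number of people to invite.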

Method #8: Secondary Data Analysis

Secondary data analysis uses existing datasets collected by others—government agencies, research institutions, companies, or public databases. This approach efficiently leverages previous data collection investments.

Common sources:

Census data, government statistics, industry reports, academic datasets, organizational records, public health surveillance, economic indicators, and research repositories provide secondary data opportunities.

Best applications:

- Conducting longitudinal analyses across decades

- Comparing regions, countries, or time periods

- Accessing expensive or difficult-to-collect data

- Supplementing primary data with contextual information

Strengths:

→ Immediate availability starting analysis quickly

→ Large samples exceeding primary collection feasibility

→ Historical data for trend analysis

→ No participant burden using existing information

Weaknesses:

- Data may not perfectly match research questions

- Limited knowledge of collection procedures

- Variable definitions may differ from needs

- Cannot collect additional variables missing from datasets

Research from Paperpal notes that secondary data analysis proves ideal when primary collection isn’t feasible due to time or budget constraints.

The Decision Framework: Choosing Your Optimal Method

Selecting the right data collection method requires systematic evaluation of multiple factors. This framework guides you through critical considerations ensuring your choice aligns with research objectives and practical constraints.

Factor #1: Research Questions and Objectives

Your research questions fundamentally determine appropriate methods. Begin by examining what you’re trying to understand or measure.

Exploratory research questions investigating new phenomena or generating theories typically require qualitative methods. For instance, “How do patients experience telemedicine during pandemic restrictions?” demands interviews or observations capturing rich experiences.

Descriptive research questions documenting characteristics or patterns often use surveys or structured observations. Questions like “What percentage of students use mental health services?” need quantitative approaches yielding numeric answers.

Explanatory research questions establishing cause-and-effect relationships necessitate experimental designs. When asking “Does peer tutoring improve exam performance?” you need controlled experiments comparing tutored and non-tutored groups.

Evaluative research questions assessing programs or interventions may require mixed methods. “How effective is the new training program?” benefits from quantitative outcome measures combined with qualitative participant feedback.

According to selection criteria research, clearly defining research questions represents the first critical step in method selection.

Factor #2: Data Type Requirements

Consider what kind of data will actually answer your questions. This practical assessment prevents collecting interesting but irrelevant information.

Numeric data needs point toward quantitative methods. If you need to measure frequency, magnitude, or statistical relationships, surveys, experiments, or structured observations make sense.

Narrative data requirements indicate qualitative approaches. When understanding meanings, experiences, or processes matters more than quantities, interviews, focus groups, or document analysis prove appropriate.

Both numeric and narrative needs suggest mixed methods. Many research questions benefit from quantitative patterns combined with qualitative explanations of those patterns.

Furthermore, data collection experts emphasize that data type dictates methodological choices—quantitative data measures trends while qualitative data explores depth.

Factor #3: Population and Sample Considerations

Your target population’s characteristics significantly influence method feasibility and appropriateness. Carefully assess who you need to reach and how to access them effectively.

Population size affects method selection. Large populations (thousands or millions) favor surveys or secondary data analysis. Smaller populations (dozens or hundreds) enable more intensive methods like interviews.

Accessibility determines practical options. Easily reached populations (employees, students) support various methods. Hard-to-reach groups (homeless individuals, corporate executives) may require specialized recruitment or sampling strategies.

Literacy and language capabilities matter for self-administered surveys. Low literacy populations benefit from interviews or visual methods. Multiple languages present translation challenges requiring adaptation.

Technology access limits online methods. Populations without reliable internet require in-person, telephone, or postal approaches instead of web-based collection.

Availability and willingness to participate vary. Busy professionals might complete brief surveys but not lengthy interviews. Conversely, some populations welcome opportunities to share experiences in depth.

Research indicates that careful population assessment ensures selected methods remain feasible within access constraints.

Factor #4: Resource Availability

Honest resource assessment prevents selecting methods you cannot execute properly. Consider time, budget, expertise, and equipment realistically.

Time constraints vary dramatically by method. Secondary data analysis can begin immediately, while designing and implementing surveys requires weeks or months. Experiments and longitudinal studies demand even longer timelines.

Budget limitations eliminate certain options. Large-scale surveys, incentivizing participants, and specialized equipment require substantial funding. Conversely, document analysis and small-scale interviews fit modest budgets.

Researcher expertise affects quality. Unfamiliar methods require training or collaboration with specialists. For instance, proper focus group moderation demands specific skills often requiring professional facilitators.

Equipment and technology needs range from simple (paper surveys) to complex (specialized observation equipment, data collection software). Ensure you can access and afford required tools.

Personnel availability influences capacity. Solo researchers face different constraints than research teams. Data collection requiring multiple simultaneous locations necessitates adequate staff.

According to marketing research guidelines, budget constraints significantly influence method selection—online surveys prove more cost-effective than in-depth interviews or focus groups.

Factor #5: Validity and Reliability Needs

All research requires valid and reliable data, but standards vary by field and purpose. Understanding these requirements guides method selection.

Validity means measuring what you intend to measure. Face validity (appears appropriate), content validity (covers the construct), criterion validity (correlates with outcomes), and construct validity (aligns with theory) all matter.

Different methods offer varying validity strengths. Experiments excel at internal validity (causal conclusions) but may lack external validity (real-world applicability). Surveys offer breadth and, with sound sampling, strong external validity, but limited depth. Observations capture authentic behaviors, improving ecological validity.

Reliability refers to consistency—repeated measurements should produce similar results. Structured methods (standardized surveys, coded observations) generally offer higher reliability than unstructured approaches.
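For coded observations, reliability is often checked by having two observers code the same material independently and computing Cohen's kappa, κ = (p_o - p_e) / (1 - p_e), where p_o is observed agreement and p_e is the agreement expected by chance. A minimal sketch with invented codes for ten observation intervals:

```python
from collections import Counter

def cohens_kappa(coder_a, coder_b):
    """Chance-corrected agreement between two coders assigning categorical codes."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must code the same non-empty set of items")
    n = len(coder_a)
    # p_o: share of items on which the two coders agree
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # p_e: agreement expected if each coder assigned codes independently at their own rates
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / n ** 2
    if expected == 1:
        return 1.0  # both coders used one identical code throughout; kappa is formally undefined
    return (observed - expected) / (1 - expected)

# Hypothetical codes from two observers for the same ten observation intervals
observer_1 = ["on", "on", "off", "on", "disrupt", "on", "off", "on", "on", "off"]
observer_2 = ["on", "on", "off", "off", "disrupt", "on", "off", "on", "on", "on"]
kappa = cohens_kappa(observer_1, observer_2)
print(round(kappa, 2))  # 0.63
```

Raw agreement in this example is 80%, but kappa is lower because part of that agreement would be expected by chance alone. Thresholds for "acceptable" kappa vary by field.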

Furthermore, research design specialists emphasize that tools must measure intended constructs accurately and consistently, making validation and reliability testing essential.

Factor #6: Ethical Considerations

Ethical data collection protects participants while maintaining research integrity. Some methods raise specific ethical challenges requiring careful planning.

Informed consent requirements affect method selection. Observational studies in public spaces may not require explicit consent, while experiments involving risks necessitate detailed consent procedures.

Privacy and confidentiality concerns vary by topic sensitivity. Anonymous surveys enable honest responses about stigmatized topics. Conversely, interviews about sensitive subjects require robust confidentiality protections.

Participant burden influences ethical acceptability. Lengthy or repeated data collection must be justified by research importance. Minimizing burden while obtaining necessary data requires careful design.

Vulnerable populations (children, prisoners, cognitively impaired individuals) require special protections. Additional approvals and safeguards limit feasible methods.

Power dynamics between researchers and participants need consideration. Employment or educational relationships may coerce participation, requiring alternative recruitment strategies.

Research demonstrates that ethical practices remain paramount—ensuring informed consent, protecting privacy, and maintaining transparency about data use.

Comparing Methods: When to Use Each Approach

Direct comparisons help visualize which methods suit specific research scenarios. This section provides practical guidance matching methods to common research situations.

Use Surveys When:

✓ You need standardized data from large samples

✓ Measuring specific attitudes, opinions, or behaviors quantitatively

✓ Budget constraints prevent more expensive methods

✓ Participants are geographically dispersed

✓ Anonymous responses increase honesty about sensitive topics

Avoid surveys when:

- You need deep understanding of complex experiences

- Participants have low literacy or language barriers

- Topics require explanation or clarification

- Sample is too small for meaningful statistical analysis

Use Interviews When:

✓ Exploring complex individual experiences or perspectives

✓ Topics require building rapport and trust

✓ Flexibility to probe unexpected responses adds value

✓ Population size is manageable for intensive data collection

✓ Rich, detailed narratives answer research questions

Avoid interviews when:

- Large samples are required for generalization

- Budget or time severely constrained

- Standardized responses needed for comparison

- Researcher lacks interviewing skills or training

Use Focus Groups When:

✓ Group interactions and dynamics provide valuable insights

✓ Exploring cultural norms or collective attitudes

✓ Testing reactions to products, services, or concepts

✓ Time efficiency matters (gathering multiple perspectives simultaneously)

✓ Participants can comfortably discuss topics in groups

Avoid focus groups when:

- Topics are highly sensitive or personal

- Individual perspectives matter more than group dynamics

- Dominant personalities might suppress diverse views

- Coordination challenges prevent gathering participants

Use Observations When:

✓ Behaviors are better understood through watching than asking

✓ Participants might not accurately self-report actions

✓ Environmental context significantly influences phenomena

✓ Non-verbal communication provides important insights

✓ Naturalistic data collection enhances validity

Avoid observations when:

- Behaviors occur rarely or unpredictably

- Internal experiences (thoughts, feelings) are primary interest

- Observer presence substantially alters behaviors

- Ethical concerns about consent or privacy arise

Use Experiments When:

✓ Establishing causation is essential

✓ Variables can be manipulated ethically

✓ Control over confounding factors is possible

✓ Precise effect measurement matters

✓ Resources support controlled implementation

Avoid experiments when:

- Ethical constraints prevent manipulation

- Naturalistic settings are crucial

- Budget or time inadequate for proper controls

- Real-world applicability (external validity) matters more than causal control (internal validity)

Use Secondary Data When:

✓ Existing datasets address research questions adequately

✓ Primary collection is impossible or impractical

✓ Historical or comparative analysis is needed

✓ Budget severely limited

✓ Large-scale data exceeds primary collection capacity

Avoid secondary data when:

- Available data don’t match research needs closely

- Data quality is questionable or unknown

- Currency is critical and data are outdated

- Specific variables essential to research are unavailable

According to comprehensive method guides, understanding each method’s strengths and limitations helps collect appropriate data for specific needs.

Common Mistakes to Avoid

Even experienced researchers sometimes make data collection errors that compromise study quality. Recognizing these pitfalls helps you avoid them proactively.

Mistake #1: Method-Driven Rather Than Question-Driven Selection

Perhaps the most common error involves choosing methods based on familiarity or preference rather than research question requirements. Researchers sometimes select approaches they know well, even when other methods would serve objectives better.

How to avoid this:

Start with research questions, not methods. Explicitly map each question to appropriate data types and collection approaches. Then select methods matching those requirements, even if they push you outside comfort zones.

If necessary methods exceed your expertise, seek collaborators, training, or consultants rather than forcing inappropriate familiar methods onto mismatched questions.

Mistake #2: Inadequate Pilot Testing

Launching data collection without thorough pilot testing frequently leads to discovering problems only after investing substantial resources. Surveys with confusing questions, interview protocols missing key topics, or observation frameworks lacking important categories all result from insufficient testing.

How to avoid this:

Always pilot test instruments and procedures with small samples resembling your target population. Analyze pilot data to identify ambiguities, technical problems, or missing elements. Revise instruments based on pilot findings before full implementation.
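One quick check on pilot data is item nonresponse: questions that many pilot participants skip are often confusing, intrusive, or badly placed. A minimal sketch, assuming one record per respondent with None for blank items, and an arbitrary 20% threshold chosen for illustration:

```python
# Hypothetical pilot data: one dict per respondent, None = item left blank.
pilot = [
    {"q1": 4, "q2": None, "q3": 2},
    {"q1": 5, "q2": None, "q3": 3},
    {"q1": 3, "q2": 1, "q3": None},
    {"q1": 4, "q2": None, "q3": 4},
    {"q1": 2, "q2": 2, "q3": 5},
]

def flag_problem_items(responses, max_missing_rate=0.2):
    """Return items whose skip rate exceeds the threshold, mapped to that rate."""
    flagged = {}
    for item in responses[0].keys():
        missing = sum(1 for r in responses if r.get(item) is None)
        rate = missing / len(responses)
        if rate > max_missing_rate:
            flagged[item] = rate
    return flagged

print(flag_problem_items(pilot))  # {'q2': 0.6}
```

A flagged item is a prompt for follow-up, such as asking pilot participants what they found unclear, rather than proof that the question is flawed.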

Additionally, research best practices recommend pre-testing data collection instruments with small groups to identify potential issues before full deployment.

Mistake #3: Ignoring Sample Size Requirements

Collecting insufficient data undermines statistical analyses and limits generalizability. Many researchers underestimate sample sizes needed for adequate statistical power or qualitative saturation.

How to avoid this:

For quantitative studies, conduct power analyses before data collection determining minimum sample sizes for detecting expected effects. Account for anticipated non-response or attrition when setting recruitment targets.
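A basic power analysis for comparing two group means can be sketched with the normal-approximation formula n per group = 2(z_(1-α/2) + z_(1-β))² / d², where d is the expected standardized effect size (Cohen's d). The medium effect (d = 0.5) and 20% attrition rate below are illustrative assumptions; tools that use the exact t distribution report a slightly larger n.

```python
from math import ceil
from statistics import NormalDist

def n_per_group_means(effect_size, alpha=0.05, power=0.80):
    """Per-group n to detect a standardized mean difference d (two-sided, normal approximation)."""
    z = NormalDist()
    z_total = z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)
    return ceil(2 * z_total ** 2 / effect_size ** 2)

def recruitment_target(n_required, expected_attrition):
    """Inflate the analyzable sample so enough participants remain after dropout."""
    return ceil(n_required / (1 - expected_attrition))

n = n_per_group_means(0.5)             # assumed medium effect size
print(n, recruitment_target(n, 0.20))  # 63 79
```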

For qualitative research, plan for theoretical or thematic saturation—continuing data collection until new information stops emerging. This typically requires 12-20 interviews or 3-6 focus groups, though complexity varies by topic.
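Saturation is usually judged by tracking how many new codes each additional interview contributes. A minimal sketch, assuming each interview has already been coded into a set of themes and using a hypothetical stopping rule (three consecutive interviews with no new codes); published approaches differ in how they define this run length, so treat it as a judgment call:

```python
def saturation_point(codes_per_interview, run_length=3):
    """Return the 1-based interview count at which saturation is reached, or None.

    Hypothetical stopping rule: saturation is declared once `run_length`
    consecutive interviews contribute no code that was not already seen.
    """
    seen = set()
    streak = 0
    for index, codes in enumerate(codes_per_interview, start=1):
        new_codes = set(codes) - seen
        seen |= new_codes
        streak = 0 if new_codes else streak + 1  # reset whenever something new appears
        if streak == run_length:
            return index
    return None

# Invented theme codes from seven interviews
interviews = [
    {"cost", "trust"}, {"trust", "wait_times"}, {"convenience"},
    {"cost", "privacy"}, {"trust"}, {"wait_times", "cost"}, {"privacy"},
]
print(saturation_point(interviews))  # 7
```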

Mistake #4: Poor Timing and Scheduling

Collecting data during inappropriate times—when participants are unavailable, stressed, or when events influence responses—can skew results substantially. Holiday periods, examination weeks, or crisis situations affect response rates and answer quality.

How to avoid this:

Research your population’s calendars and schedules before planning data collection. Avoid known busy periods, holidays, or predictable stressors. Allow sufficient time for recruitment and participation without rushing participants.

Furthermore, build flexibility into timelines accommodating unexpected delays. Rigid schedules often force compromises undermining data quality.

Mistake #5: Insufficient Researcher Training

Data collection quality depends heavily on researcher competence. Untrained interviewers, inconsistent observers, or poorly prepared survey administrators introduce errors and bias reducing data reliability.

How to avoid this:

Invest in thorough training for everyone involved in data collection. Develop detailed protocols and practice procedures until consistency is achieved. For multi-site studies, ensure standardization across all locations.

Monitor data collection quality throughout the process with regular checks and debriefings. Address problems immediately rather than discovering them during analysis.

Mistake #6: Neglecting Data Management Planning

Many researchers focus entirely on collection while ignoring how they’ll organize, store, and prepare data for analysis. This oversight creates chaos when analysis begins.

How to avoid this:

Develop data management plans before collection starts. Specify file naming conventions, storage locations, backup procedures, and confidentiality protections. Create codebooks defining all variables.
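A codebook can double as a machine-readable validation specification. The sketch below assumes a simple in-house structure (the Variable class, the -99 missing-value code, and the example variables are all hypothetical) and checks each incoming record against it.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class Variable:
    """One codebook entry (field names are illustrative, not a standard format)."""
    name: str
    label: str
    kind: str                                           # "numeric" or "categorical"
    valid_range: Optional[Tuple[float, float]] = None   # inclusive bounds for numeric variables
    categories: Dict[int, str] = field(default_factory=dict)  # code -> meaning
    missing_codes: Tuple[int, ...] = (-99,)             # codes reserved for "no answer"


CODEBOOK = [
    Variable("age", "Age in years", "numeric", valid_range=(18, 99)),
    Variable("employment", "Current employment status", "categorical",
             categories={1: "Employed", 2: "Unemployed", 3: "Not in labor force"}),
]


def validate(record: dict, codebook: List[Variable]) -> List[str]:
    """Return human-readable problems found in one record."""
    problems = []
    for var in codebook:
        value = record.get(var.name)
        if value is None or value in var.missing_codes:
            continue  # missing values are handled by the missing-data plan
        if var.kind == "numeric" and var.valid_range is not None:
            low, high = var.valid_range
            if not low <= value <= high:
                problems.append(f"{var.name}={value} outside {var.valid_range}")
        elif var.kind == "categorical" and value not in var.categories:
            problems.append(f"{var.name}={value} is not a defined code")
    return problems


print(validate({"age": 130, "employment": 4}, CODEBOOK))
# prints: ['age=130 outside (18, 99)', 'employment=4 is not a defined code']
```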

Plan data cleaning and quality checking procedures. Identify how you’ll handle missing data, outliers, and inconsistencies. These preparations streamline analysis and prevent data loss disasters.

Ensuring Data Quality and Reliability

High-quality data collection requires attention to quality throughout the process. The following practices help maintain the standards your data needs to support valid conclusions.

Strategy #1: Standardize Procedures

Consistency in data collection reduces variability from procedural differences. Standardized approaches enable comparison across participants, time points, and locations.

Implementation approaches:

Develop detailed written protocols documenting every data collection step. Train all personnel on these protocols until they can execute them identically. Use checklists to ensure critical steps aren’t omitted.

For surveys, maintain identical question wording and order across all participants. During interviews, follow structured guides while allowing necessary flexibility. In observations, apply coding schemes consistently.

According to data quality research, following standardized data collection methods ensures accuracy and enables proper comparison.

Strategy #2: Implement Quality Checks

Regular quality monitoring identifies problems early, while they’re still fixable. Waiting for analysis to reveal issues wastes resources and may force you to repeat data collection.

Quality check methods:

Review data collection materials (completed surveys, interview recordings, field notes) regularly for completeness and consistency. Check for missing data patterns indicating systematic problems.

Monitor response rates and participant characteristics to ensure adequate representation. Conduct random audits of data entry accuracy. Hold debriefing sessions with data collectors to identify challenges and solutions.

Furthermore, establish clear quality thresholds. For example, if more than 10% of survey respondents skip a question, investigate whether the question is confusing or sensitive.
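A threshold like this is straightforward to check automatically during fieldwork. The sketch below assumes responses are stored as one dictionary per respondent, with None marking a skipped question, and that every respondent was shown every question; items hidden by skip logic would need to be removed from the denominator first. The question IDs and data are hypothetical.

```python
from typing import Dict, List, Optional


def flag_high_skip_items(responses: List[Dict[str, Optional[object]]],
                         threshold: float = 0.10) -> Dict[str, float]:
    """Return {question: skip rate} for questions skipped by more than `threshold`.

    A value of None (or an absent key) counts as a skip.
    """
    questions = sorted({q for r in responses for q in r})
    flagged = {}
    for q in questions:
        skipped = sum(1 for r in responses if r.get(q) is None)
        rate = skipped / len(responses)
        if rate > threshold:
            flagged[q] = rate
    return flagged


# Ten hypothetical respondents: q2 skipped by two people, q3 by one.
responses = [
    {"q1": 3, "q2": None if i < 2 else 4, "q3": None if i == 0 else 5}
    for i in range(10)
]
print(flag_high_skip_items(responses))  # prints: {'q2': 0.2}
```

Note that q3, skipped by exactly 10%, is not flagged because the rule is "more than" the threshold.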

Strategy #3: Maintain Detailed Documentation

Comprehensive documentation enables transparency and replication while helping you remember critical decisions months later during analysis and writing.

What to document:

Record all methodological decisions and their rationales. Document any changes made during data collection and explain why each modification occurred. Keep logs of data collection dates, locations, and personnel.

Preserve original data in addition to cleaned versions. Maintain audit trails showing all data transformations. These records prove invaluable when reviewers question procedures or when attempting to replicate findings.
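One lightweight way to keep an audit trail is to route every cleaning step through a function that records what was done. The sketch below is a hypothetical helper (the class, step descriptions, and example data are illustrative): it works on a copy so the raw data is preserved, and for each step it logs a timestamp, a description, the row count, and a short hash of the data before and after, so any saved version can be matched to its log entry.

```python
import datetime
import hashlib
import json
from typing import Callable, Dict, List

Rows = List[Dict[str, object]]


class AuditedDataset:
    """Hypothetical helper: applies cleaning steps and logs each one."""

    def __init__(self, raw_rows: Rows):
        self.rows = [dict(r) for r in raw_rows]  # work on copies; raw data stays untouched
        self.log: List[Dict[str, object]] = []

    def _fingerprint(self) -> str:
        payload = json.dumps(self.rows, sort_keys=True, default=str).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()[:12]

    def apply(self, description: str, step: Callable[[Rows], Rows]) -> "AuditedDataset":
        before = self._fingerprint()
        self.rows = step(self.rows)
        self.log.append({
            "when": datetime.datetime.now(datetime.timezone.utc).isoformat(),
            "step": description,
            "rows_after": len(self.rows),
            "hash_before": before,
            "hash_after": self._fingerprint(),
        })
        return self


def drop_duplicate_ids(rows: Rows) -> Rows:
    """Keep the first row for each participant ID."""
    seen, kept = set(), []
    for r in rows:
        if r["id"] not in seen:
            seen.add(r["id"])
            kept.append(r)
    return kept


raw = [{"id": 1, "age": 34}, {"id": 2, "age": 340}, {"id": 2, "age": 340}]
ds = AuditedDataset(raw)
ds.apply("Drop duplicate participant IDs", drop_duplicate_ids)
ds.apply("Set implausible ages (>120) to missing",
         lambda rows: [{**r, "age": None if r["age"] > 120 else r["age"]} for r in rows])
for entry in ds.log:
    print(entry["step"], entry["rows_after"], entry["hash_before"], "->", entry["hash_after"])
```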

Research from Georgetown Law Library emphasizes that documenting data collection methods makes ultimate conclusions more credible by allowing evaluation of methods for flaws and biases.

Strategy #4: Address Missing Data Appropriately

Missing data occurs in virtually every study. However, how you handle it significantly affects analysis validity.

Missing data approaches:

First, minimize missing data through careful design, clear instructions, and follow-up procedures. Nevertheless, some missingness is inevitable.

Analyze missing data patterns. Is data missing completely at random, missing at random, or missing not at random? Different patterns require different analytical strategies.
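A simple first diagnostic is to compare how often an item is missing across groups defined by another, fully observed variable. If missingness differs markedly between groups, the data are probably not missing completely at random. Observed data alone cannot distinguish missing at random from missing not at random, and formal procedures such as Little's MCAR test are available in several statistics packages; the sketch below is only descriptive, and its variable names and values are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List


def missing_rate_by_group(rows: List[dict], item: str, group: str) -> Dict[str, float]:
    """Share of rows with `item` missing (None or absent), split by `group`."""
    counts = defaultdict(lambda: [0, 0])  # group value -> [missing, total]
    for r in rows:
        tally = counts[r[group]]
        tally[1] += 1
        if r.get(item) is None:
            tally[0] += 1
    return {g: missing / total for g, (missing, total) in counts.items()}


rows = (
    [{"age_group": "18-34", "income": v} for v in (42000, 51000, None, 38000)]
    + [{"age_group": "65+", "income": v} for v in (None, None, 29000, None)]
)
print(missing_rate_by_group(rows, "income", "age_group"))
# prints: {'18-34': 0.25, '65+': 0.75}
```

A gap this large would suggest that missing income is related to age, which should inform the choice of handling method.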

For surveys, consider whether questions generating frequent non-response need revision. In interviews, probe gently when participants skip topics. Document reasons for missing data when known—this contextual information aids interpretation.

Strategy #5: Ensure Confidentiality and Security

Protecting participant privacy maintains trust and meets ethical obligations. Data breaches damage research reputation and may harm participants.

Security measures:

Store data securely with access limited to authorized personnel. Use encryption for digital data and locked cabinets for physical materials. Remove or code identifying information as soon as feasible.
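One common way to code identifying information is keyed hashing: each direct identifier (here an email address) is replaced by a pseudonym computed with a secret key, so the same person always receives the same code but nobody without the key can recompute it. The sketch below uses HMAC-SHA256 from Python's standard library; the "P-" prefix, the 16-character length, and the normalization step are illustrative choices. Keyed pseudonyms are pseudonymized, not anonymized, data (under the GDPR, pseudonymized data remains personal data), so the key needs the same protection as the identifiers themselves.

```python
import hashlib
import hmac
import secrets


def make_key() -> bytes:
    """Generate a 256-bit secret key; store it apart from the research data."""
    return secrets.token_bytes(32)


def pseudonymize(identifier: str, key: bytes) -> str:
    """Map an identifier to a stable pseudonym using HMAC-SHA256."""
    normalized = identifier.strip().lower().encode("utf-8")  # " Ana@x.org" matches "ana@x.org"
    digest = hmac.new(key, normalized, hashlib.sha256).hexdigest()
    return "P-" + digest[:16]


key = make_key()
record = {"email": "Ana@example.org", "score": 7}
deidentified = {"pid": pseudonymize(record["email"], key), "score": record["score"]}
print(deidentified)
```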

Develop protocols for data transfer and sharing. Train team members on confidentiality requirements. Plan data retention and destruction schedules that comply with institutional and legal requirements.

Moreover, ethical data practices require protecting privacy, building trust, mitigating breach risks, and complying with regulations like GDPR.

Emerging Trends in Data Collection

Data collection methods continue to evolve with technological advances and changing research needs. Understanding emerging trends helps researchers adopt innovative approaches that improve efficiency and yield richer insights.

Trend #1: Digital and Mobile Data Collection

Traditional paper-based methods are rapidly giving way to digital approaches. Mobile devices, tablets, and smartphones enable real-time data collection with built-in quality checks and immediate uploads.

Digital advantages:

Automatic skip logic ensures participants only see relevant questions. Real-time validation prevents impossible responses (birthdates in the future, ages under zero). GPS coordinates automatically document collection locations. Multimedia capabilities capture photos, audio, or video easily.
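Survey platforms configure skip (display) logic and validation through their own interfaces, so the following is only a platform-neutral sketch of the idea. Each question carries an optional display condition based on earlier answers, and each validated field has a rule that rejects impossible values. The question IDs, the 168-hour weekly limit, and the data structures are illustrative assumptions.

```python
from datetime import date, timedelta
from typing import Any, Callable, Dict, List, Optional

# Each question has an optional display condition based on earlier answers.
QUESTIONS: List[dict] = [
    {"id": "employed", "show_if": None},
    {"id": "hours_per_week", "show_if": lambda a: a.get("employed") == "yes"},
    {"id": "job_search", "show_if": lambda a: a.get("employed") == "no"},
    {"id": "birthdate", "show_if": None},
]

# Validation rules return an error message, or None if the value is acceptable.
VALIDATORS: Dict[str, Callable[[Any], Optional[str]]] = {
    "hours_per_week": lambda v: None if 0 <= v <= 168 else "Hours must be between 0 and 168.",
    "birthdate": lambda v: None if v <= date.today() else "Birthdate cannot be in the future.",
}


def visible_questions(answers: dict) -> List[str]:
    """Questions this respondent should see, given the answers so far."""
    return [q["id"] for q in QUESTIONS if q["show_if"] is None or q["show_if"](answers)]


def validate(question_id: str, value: Any) -> Optional[str]:
    """Apply the question's validation rule, if it has one."""
    rule = VALIDATORS.get(question_id)
    return rule(value) if rule else None


print(visible_questions({"employed": "no"}))  # prints: ['employed', 'job_search', 'birthdate']
print(validate("birthdate", date.today() + timedelta(days=1)))
```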

Furthermore, digital methods eliminate data entry errors from transcribing paper forms. Data becomes immediately available for analysis. Costs decrease substantially for large-scale studies.

Popular platforms include Qualtrics, Google Forms, SurveyMonkey, and specialized research apps. These tools make sophisticated data collection, which previously required extensive programming, widely accessible.

Trend #2: Passive and Sensor-Based Collection

Passive data collection captures information without requiring active participant responses. Sensors, wearables, and tracking technologies monitor behaviors, locations, and physiological states continuously.

Applications:

Fitness trackers monitor activity levels, sleep patterns, and heart rates automatically. Smartphone apps track location, screen time, and communication patterns. Environmental sensors measure air quality, noise levels, or light exposure.

These approaches reduce participant burden while capturing granular temporal data impossible through traditional methods. However, they raise privacy concerns requiring careful ethical consideration and transparent consent procedures.

Trend #3: Social Media and Digital Trace Data

Social media platforms, websites, and digital services generate massive behavioral datasets. Researchers increasingly leverage these “digital traces” for insights into opinions, networks, and activities.

Research opportunities:

Twitter/X data reveals public discourse and sentiment. Facebook data illuminates social networks and information diffusion. Online search data indicates population-level interests and concerns. Website analytics show user behaviors and preferences.

Nevertheless, digital trace data requires careful interpretation. Online populations don’t represent general populations. Privacy and ethical considerations complicate access and use. Platform changes can disrupt data availability unpredictably.

Trend #4: Artificial Intelligence and Automated Analysis

AI technologies are transforming not just data analysis but collection itself. Natural language processing, image recognition, and machine learning enable automated coding and pattern detection.

AI applications:

Sentiment analysis automatically codes open-ended responses. Speech recognition transcribes interviews instantly. Image recognition codes photos or videos. Chatbots conduct structured interviews 24/7.

These technologies can increase efficiency dramatically. However, they require validation to confirm that their accuracy matches human coding, and bias in AI systems can amplify problems in collection and analysis.

Trend #5: Participatory and Citizen Science Approaches

Traditional researcher-driven collection is being supplemented by participatory methods that engage communities as co-researchers. Citizen science projects recruit public volunteers who collect data at scales impossible for professional researchers alone.

Examples:

Environmental monitoring by community volunteers. Health tracking through patient-reported outcomes. Historical archive transcription by public contributors. Biodiversity observations through apps like iNaturalist.

Participatory approaches democratize research, increase cultural appropriateness, and build community capacity. However, they require careful quality control and raise questions about authorship and credit.

Practical Implementation: Your Step-by-Step Guide

Moving from method selection to actual implementation requires systematic planning. This guide walks you through the essential steps for successful data collection.

Step 1: Finalize Research Design

Before collecting any data, completely specify your research design. Vague plans inevitably lead to problems during collection and analysis.

Design specifications:

Document research questions, hypotheses, or objectives clearly. Define all variables including operational definitions explaining exactly how you’ll measure abstract constructs.

Specify your sampling strategy including population, sample size calculations, and selection procedures. Outline your analysis plan—knowing how you’ll analyze data ensures you collect what you actually need.

Create a detailed timeline for all research phases. Identify dependencies between tasks and build in buffers for inevitable delays.

Step 2: Develop Data Collection Instruments

Instrument development deserves substantial time and attention. Quality instruments are tested, revised, and retested before use.

Development process:

For surveys, draft clear questions, avoid leading language, and use appropriate response scales. Arrange questions logically, with important items early.

For interviews, develop discussion guides with key topics, sample questions, and probes for deeper exploration. Balance enough structure to ensure comparability with enough flexibility to allow natural conversation.

For observations, create coding schemes that define categories precisely. Develop recording forms or checklists to streamline documentation. Establish inter-rater reliability procedures if multiple observers are involved.
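Inter-rater reliability for two observers coding the same items into categories is often summarized with Cohen's kappa, which corrects raw percent agreement for the agreement expected by chance. A minimal implementation is sketched below with hypothetical on-task/off-task codes; in practice, scikit-learn's `sklearn.metrics.cohen_kappa_score` computes the same statistic, and designs with more than two observers call for alternatives such as Fleiss' kappa or Krippendorff's alpha.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable]) -> float:
    """Cohen's kappa for two raters who each assign one category per item."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must code the same, non-empty set of items.")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: probability both raters independently pick the same category.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / n ** 2
    if expected == 1:
        return float("nan")  # undefined when both raters use one identical category
    return (observed - expected) / (1 - expected)


observer_1 = ["on", "on", "off", "off", "on", "off", "on", "on", "off", "on"]
observer_2 = ["on", "off", "off", "off", "on", "off", "on", "on", "on", "on"]
print(round(cohens_kappa(observer_1, observer_2), 3))  # prints: 0.583
```

Here the observers agree on 80% of intervals, but because 52% agreement would be expected by chance alone, kappa is only about 0.58.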

According to instrument development research, surveys, interview guides, and observation protocols should be pre-tested with small groups to identify issues before full deployment.

Step 3: Obtain Ethical Approvals

Never begin data collection without the required ethical approvals. Institutional Review Boards (IRBs) or ethics committees review research to protect participant welfare.

Approval requirements:

Prepare detailed protocols describing procedures, risks, benefits, and confidentiality protections. Develop informed consent materials explaining research clearly in accessible language.

Submit applications early enough to allow adequate review time (often at least 4-8 weeks). Respond promptly to reviewer questions or requests for modifications.

Only begin recruitment and data collection after receiving formal approval. Follow approved protocols exactly—any changes require amendment approval before implementation.

Step 4: Pilot Test Thoroughly

Pilot testing represents your last opportunity to identify and fix problems before full data collection. Invest time here to avoid much larger problems later.

Pilot testing procedures:

Recruit 10-30 participants similar to your target population. Administer your instruments exactly as planned for the full study. Debrief participants about confusing questions, technical problems, or other concerns.

Analyze pilot data checking for unexpected patterns, ceiling or floor effects, or excessive missing data. Review whether collected data actually addresses research questions.
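These checks are easy to automate for each pilot item. The sketch below reports the share of missing answers and the share of respondents at the lowest (floor) and highest (ceiling) scale points. The 15% floor/ceiling cut-off is an assumption borrowed from a convention sometimes used in questionnaire validation, and the 10% missing-data cut-off mirrors the skip threshold suggested earlier in this guide; both should be adjusted to your context, and the pilot data are hypothetical.

```python
from typing import List, Optional


def pilot_item_report(scores: List[Optional[int]], scale_min: int, scale_max: int,
                      floor_ceiling_limit: float = 0.15,
                      missing_limit: float = 0.10) -> dict:
    """Summarize one pilot item; None means the participant did not answer."""
    answered = [s for s in scores if s is not None]
    n_answered = len(answered)
    report = {
        "missing": (len(scores) - n_answered) / len(scores),
        "floor": answered.count(scale_min) / n_answered if n_answered else 0.0,
        "ceiling": answered.count(scale_max) / n_answered if n_answered else 0.0,
    }
    flags = []
    if report["missing"] > missing_limit:
        flags.append("missing")
    for effect in ("floor", "ceiling"):
        if report[effect] > floor_ceiling_limit:
            flags.append(effect)
    report["flags"] = flags
    return report


# Ten hypothetical pilot answers on a 1-5 agreement scale.
item = [5, 5, 5, 4, 5, None, 3, 5, 5, 4]
print(pilot_item_report(item, scale_min=1, scale_max=5)["flags"])  # prints: ['ceiling']
```

A ceiling flag like this one suggests the item cannot distinguish among respondents at the top of the scale; rewording the item or extending the response options are typical revisions.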

Revise instruments based on pilot findings. If changes are substantial, conduct additional pilot testing. Only proceed to full data collection when pilot results show your approach works well.

Step 5: Execute Data Collection

Finally, implement your carefully planned data collection. However, implementation isn’t automatic—active monitoring ensures quality throughout.

Implementation practices:

Train all data collection personnel thoroughly. Conduct regular team meetings discussing challenges and solutions. Monitor response rates, completion rates, and data quality metrics.
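A few ratios computed daily or weekly make these metrics concrete. The definitions below are simplified assumptions; formal response-rate definitions, such as AAPOR's Standard Definitions, distinguish eligible, ineligible, and unknown-eligibility cases. The second function compares the sample's composition with target population shares so that under-represented groups can be targeted with extra recruitment. All figures are hypothetical.

```python
from typing import Dict


def fieldwork_metrics(invited: int, started: int, completed: int) -> Dict[str, float]:
    """Simplified monitoring ratios for an ongoing survey."""
    return {
        "response_rate": completed / invited,          # completes among all invitees
        "completion_rate": completed / started,        # completes among those who began
        "breakoff_rate": (started - completed) / started,
    }


def representation_gaps(sample_counts: Dict[str, int],
                        population_shares: Dict[str, float]) -> Dict[str, float]:
    """Sample share minus population share per group (negative = under-represented)."""
    total = sum(sample_counts.values())
    return {g: sample_counts.get(g, 0) / total - share
            for g, share in population_shares.items()}


print(fieldwork_metrics(invited=800, started=300, completed=240))
gaps = representation_gaps({"18-34": 60, "35-64": 140, "65+": 40},
                           {"18-34": 0.30, "35-64": 0.50, "65+": 0.20})
print({g: round(d, 3) for g, d in gaps.items()})
```

In this example, younger and older respondents are both under-represented relative to the target shares, while the middle age group is over-represented.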

Document any deviations from planned procedures immediately. Solve problems quickly, before they affect a substantial portion of the data.

Maintain participant communication through reminders, thank you messages, and updates. Respectful, professional interactions support high response rates and quality participation.

Step 6: Prepare Data for Analysis

As data collection concludes, systematic preparation for analysis prevents chaos and errors.

Data preparation tasks:

Create well-organized datasets with clear variable names and labels. Code open-ended responses using systematic procedures. Check data for errors, outliers, and inconsistencies.
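For numeric variables, one widely used screening rule is Tukey's fences: values more than 1.5 interquartile ranges below the first quartile or above the third quartile are flagged. The sketch below uses Python's `statistics.quantiles` (default "exclusive" method). Flagged values are candidates for checking against source records, not automatic deletions, and the example ages are hypothetical.

```python
from statistics import quantiles
from typing import List


def iqr_outliers(values: List[float], k: float = 1.5) -> List[float]:
    """Return values outside [Q1 - k*IQR, Q3 + k*IQR] (Tukey's fences)."""
    q1, _, q3 = quantiles(values, n=4)  # quartile cut points; needs at least two values
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


ages = [23, 25, 27, 29, 31, 33, 35, 240]
print(iqr_outliers(ages))  # prints: [240]
```

An age of 240 is most likely a data entry error; how it was resolved should be recorded along with the other cleaning decisions.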

Document all data cleaning decisions. Handle missing data using appropriate methods. Create audit trails allowing others to understand your decisions.

Store data securely with backups in multiple locations. Prepare documentation (codebooks, methodological notes) needed for analysis and eventual sharing.

Conclusion: Making Your Decision with Confidence

Selecting the right data collection method represents one of your research’s most consequential decisions. This choice shapes everything downstream—your findings’ quality, your resource investment, your timeline, and ultimately whether you can answer your research questions convincingly.

Throughout this guide, we’ve explored fundamental distinctions between primary and secondary data, qualitative and quantitative approaches, and the eight most common collection methods. Moreover, we’ve examined critical decision factors including research questions, data requirements, population characteristics, resources, validity needs, and ethical considerations.

Remember these essential principles as you decide:

Your research questions should drive method selection, not your familiarity or preferences. Inappropriate methods, however expertly executed, cannot answer questions they’re unsuited for.

No single method is universally superior. Surveys, interviews, observations, experiments, and secondary data each excel in specific contexts while presenting limitations in others. Understanding these trade-offs enables informed choices.

Resource constraints are real and must be acknowledged. The theoretically ideal method that exceeds your budget, timeline, or expertise will fail. Better to execute a feasible approach well than a perfect approach poorly.

Quality matters more than quantity. Smaller samples of high-quality data outweigh larger samples of questionable reliability. Invest in pilot testing, training, and quality monitoring.

Mixed methods often provide the most comprehensive insights. Combining quantitative and qualitative approaches, or primary and secondary data, addresses limitations inherent in single-method designs.

Ethical data collection protects both participants and research integrity. Shortcuts that compromise privacy, consent, or welfare ultimately undermine your work’s credibility and value.

Finally, remember that data collection isn’t an isolated task—it connects to everything before (research design, literature review) and after (analysis, interpretation, dissemination). Thoughtful method selection considering these connections strengthens your entire research enterprise.

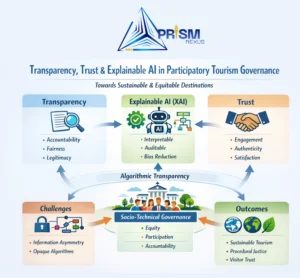

At PRISM Nexus, we specialize in helping researchers navigate these complex decisions. Our team provides expert consultation on method selection, instrument development, data collection implementation, and quality assurance. Whether you’re designing your first study or refining approaches for established research programs, we offer customized support ensuring your data collection succeeds.

Ready to Strengthen Your Research Data Collection?

Choosing and implementing optimal data collection methods requires expertise, planning, and resources. PRISM Nexus offers comprehensive support including:

→ Method selection consultation – Expert guidance matching approaches to research contexts

→ Instrument development – Creating valid, reliable surveys, interviews, and protocols

→ Quality assurance – Establishing procedures ensuring data integrity

→ Training and capacity building – Preparing teams for successful implementation

→ Mixed-methods expertise – Integrating quantitative and qualitative approaches

Contact us today to discuss how we can help ensure your research data collection delivers the insights you need.

Frequently Asked Questions About Data Collection Methods

Q: How do I decide between qualitative and quantitative data collection?

A: Your research questions determine this choice. Use qualitative methods (interviews, focus groups, observations) when exploring the “why” behind phenomena, understanding complex experiences, or generating new theories. Choose quantitative methods (surveys, experiments, structured observations) when measuring specific variables, testing hypotheses, or generalizing to larger populations. Many studies benefit from mixed methods combining both approaches.

Q: What’s the minimum sample size needed for surveys?

A: This depends on your analysis goals and population size. For simple descriptive statistics, 100-200 participants often suffice. For detecting statistical differences between groups or correlations, conduct a power analysis to determine the minimum sample size for adequate statistical power (typically 80% or higher). Margins of error also depend on sample size: at 95% confidence, for a proportion near 50% in a large population, ±3% requires approximately 1,000 responses, while ±5% needs about 400.
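The margin-of-error figures in this answer follow from the standard formula for estimating a proportion under simple random sampling, n = z^2 p(1 - p) / E^2, using the worst case p = 0.5 and z ≈ 1.96 for 95% confidence. The sketch below implements it, with an optional finite population correction for small populations; the function name and defaults are illustrative.

```python
import math
from statistics import NormalDist
from typing import Optional


def sample_size_for_moe(moe: float, confidence: float = 0.95, p: float = 0.5,
                        population: Optional[int] = None) -> int:
    """Completed responses needed to estimate a proportion within +/- moe."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # about 1.96 for 95%
    n0 = z ** 2 * p * (1 - p) / moe ** 2
    if population is not None:
        n0 = n0 / (1 + (n0 - 1) / population)  # finite population correction
    return math.ceil(n0)


print(sample_size_for_moe(0.03), sample_size_for_moe(0.05))  # prints: 1068 385
print(sample_size_for_moe(0.05, population=2000))
```

The exact values of 1,068 and 385 are what the rounded figures of 1,000 and 400 stand for. They count completed responses, so the number of invitations must be scaled up by the expected response rate.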

Q: How many interviews are enough for qualitative research?

A: Qualitative research typically requires 12-20 interviews for thematic saturation, though this varies by topic complexity and diversity. Continue conducting interviews until new information stops emerging—when you can predict what participants will say, you’ve likely reached saturation. More homogeneous populations may saturate earlier; diverse populations require more interviews.

Q: Can I use secondary data for my entire study?

A: Yes, if existing datasets adequately address your research questions. Many excellent studies use only secondary data—government statistics, archival records, or publicly available datasets. However, ensure data quality, relevance, and currency meet your needs. Often, combining secondary data with targeted primary collection provides the strongest approach.

Q: What’s the biggest mistake researchers make in data collection?

A: Choosing methods based on familiarity rather than research question requirements. Researchers often default to surveys because they seem easy, or avoid mixed methods due to complexity concerns. The most costly mistake involves collecting data that doesn’t actually answer your research questions. Always work backward from questions to methods, not forward from methods to questions.

Q: How much should I budget for data collection?

A: Costs vary enormously by method and scale. Secondary data analysis might cost only researcher time. Small-scale interview studies typically cost $2,000-$10,000, covering incentives, transcription, and analysis software. Large surveys ($10,000-$100,000+) involve sampling, programming, incentives, and fielding. Experiments requiring specialized equipment or facilities cost more. As a rough guide, budget 30-50% of total research costs for data collection.

Q: Do I need ethics approval for all data collection?

A: Most research involving human participants requires Institutional Review Board (IRB) or ethics committee approval. This includes surveys, interviews, observations, and experiments. Some secondary data analysis using de-identified public datasets may be exempt, but check with your institution’s ethics office. Never assume exemption—always submit for review. Collecting data without required approval violates ethical standards and may prevent publication.

Share this guide with fellow researchers to help them select optimal data collection methods for rigorous, impactful studies.