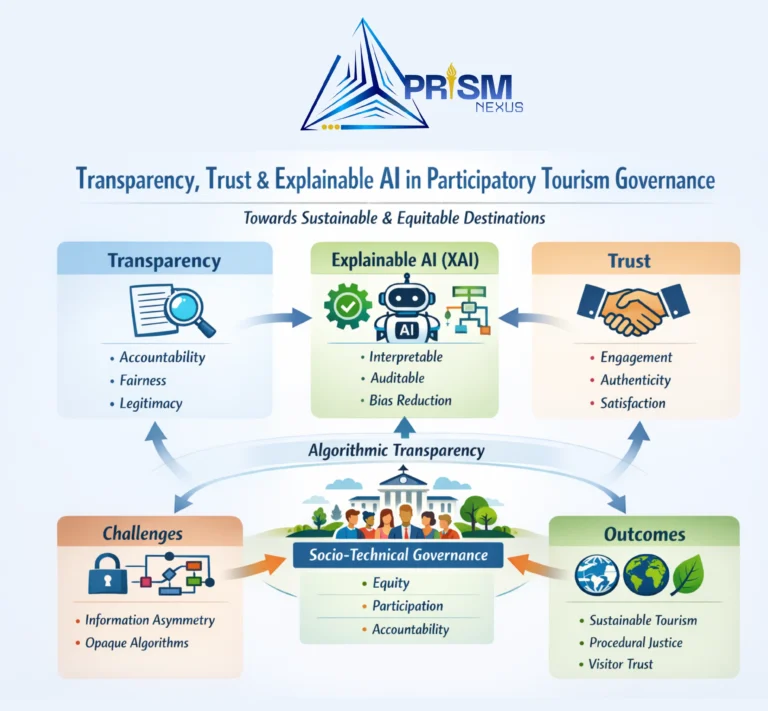

Transparency, Trust, and Explainable Artificial Intelligence in Participatory Tourism Governance: A Socio-Technical Perspective for Sustainable and Equitable Destinations.

Key highlights:

- Transparency is essential for sustainable tourism governance, as it strengthens accountability, fairness, and institutional legitimacy among governments, platforms, communities, and tourists.

- Information asymmetry and opaque digital algorithms weaken participatory tourism governance, limiting community engagement and reinforcing power imbalances in tourism decision-making.

- Explainable Artificial Intelligence (XAI) enhances transparency by making algorithmic decisions interpretable, enabling auditing, reducing bias, and improving stakeholder confidence in AI-driven tourism platforms.

- Technical transparency alone is insufficient, as XAI without strong governance and accountability frameworks may result in “transparency-washing” rather than genuine procedural justice.

- Integrating transparency, trust, and XAI within a socio-technical governance framework can improve legitimacy, stakeholder trust, and sustainable tourism outcomes while promoting responsible tourist behavior.

Overview:

Growing accountability expectations, rapid technological advances, and escalating environmental pressures are transforming tourism governance. As destinations become more digital and participatory, trust has become a critical foundation for long-term legitimacy and the equitable sharing of benefits (Organization for Economic Co-operation and Development [OECD], 2024a). Participatory governance requires clear information channels and proactive cooperation among stakeholders. However, many programmes ultimately fail because of information asymmetries, black-box algorithms, and a lack of community engagement (Dangi & Petrick, 2021). This review makes the case that transparency must move beyond disclosure into action, directly reinforcing stakeholder trust and indirectly reinforcing visitor trust and loyalty.

Explainable Artificial Intelligence (XAI) is a potentially significant means of enhancing trust and transparency in digital tourism systems. XAI makes algorithmic decision-making visible, enabling stakeholders to assess fairness and procedural justice (Xu, Wang, and Kim, 2025). This review argues that, although XAI offers a new technical solution to algorithmic opacity, it also raises far-reaching institutional problems. Without effective governance structures that secure genuine accountability, XAI can easily become an instrument of transparency-washing (Cheong, 2024). Accordingly, this review analyses how transparency, trust, and XAI operate together as an intertwined socio-technical system that shapes justice and sustainability in participatory tourism.

Transparency is central to sustainable tourism governance and to the advancement of accountability, fairness, and institutional legitimacy (Roxas, Rivera and Gutierrez 2020; Tuomi and Ascencao 2023). Participatory tourism governance rests on joint decision-making among states, communities, and platforms, in which transparent information flows support the equitable distribution of benefits and long-term legitimacy (Kim and Lee 2022; Petrick 2021). Transparency is applied unevenly because of information asymmetry, opaque algorithms, and inadequate community engagement (Dangi & Petrick, 2021).

Explainable AI (XAI) makes the choices of automated systems in digital tourism interpretable, so that these systems can be held to principles of fairness and responsibility (Xu, Wang and Kim 2025; Jin et al. 2023). Using XAI in decision-making supports auditing and helps reduce bias, thereby strengthening stakeholder confidence in AI-based platforms (OECD 2024b; Bingol and Yang 2025). XAI succeeds only when well-established data governance and clear accountability structures are in place to translate transparency into procedural fairness (Cheong 2024).

Transparency and trust reinforce one another, shaping tourists' perceptions of authenticity and their overall satisfaction (Dangi & Petrick, 2021; Singh, Lee and Tsai, 2025). When transparency and trust are institutionalized, they support responsible tourist behavior aligned with sustainability goals and reduce the scope for opportunistic greenwashing (Li et al. 2025; Wasaya, Prentice & Hsiao 2024). Integrating transparency, trust, and explainable artificial intelligence (XAI) within a single framework can enhance legitimacy, equity, and resilience in participatory tourism governance (Bingol & Yang 2025).

Digital Accountability in Sustainable Tourism Governance

Digital accountability is central to sustainable tourism governance and to the advancement of transparency, fairness, and institutional legitimacy (Roxas, Rivera and Gutierrez 2020; Tuomi and Ascencao 2023). Transparency is widely embraced as a pillar of good governance, yet in sustainable tourism it is more often a common aspiration than an attainable or binding practice. According to Roxas, Rivera and Gutierrez (2020), transparency fosters institutional legitimacy because key decisions about tourism development and its environmental effects become available to stakeholders. Similarly, Tuomi and Ascencao (2023) consider it critical to fairness and accountability, in line with Sustainable Development Goal 16 on strong institutions. In practice, however, transparency can conceal unequal power relations among governments, platforms, and communities. Bramwell and Lane (2022) argue that increased data visibility rarely guarantees participatory governance or equitable gains, since interpretative power usually remains concentrated among the most prominent decision-makers. Transparency in sustainable tourism must therefore be reconceptualized as an ongoing process of negotiated responsibility rather than a fixed state of openness.

Participatory tourism governance rests on joint decision-making among states, communities, and platforms, in which transparent information flows support the equitable distribution of benefits and long-term legitimacy (Kim and Lee 2022; Petrick 2021). Collaborative tourism depends on shared decision-making among governments, platforms, and local people, but such interactions have often lacked transparency. According to Kim and Lee (2022), the open flow of information leads to better understanding and legitimacy, and Petrick (2021) connects transparent communication with fairness and responsibility. These ideals are rarely achieved in practice. Platforms such as Airbnb tend to withhold key operational information, preventing communities from assessing local impacts (Guttentag, 2020). This lack of information undermines the tenets of participatory governance. In addition, as Tuomi and Ascencao (2023) observe, transparency can also operate as a surveillance mechanism that falls disproportionately on community initiatives rather than on large businesses. Without safeguards against these imbalances, participatory tourism risks being superficial: a facade of participation that entrenches existing power hierarchies and tokenistic stakeholder engagement.

Transparency is applied unevenly because of information asymmetry, opaque algorithms, and inadequate community engagement (Dangi & Petrick, 2021). Although transparency is a key element of sustainable tourism, its application remains inconsistent and fragmented. Dangi and Petrick (2021) note that information asymmetry is likely one of the main hurdles, since communities usually lack access to the data and analytical tools available to policymakers and digital platforms. The opacity of algorithmic systems exacerbates this imbalance by obscuring how tourism-related decisions, such as destination rankings or pricing, are made (Buhalis and Sinarta, 2019). Furthermore, community involvement is mostly superficial, limited to consultation rather than governance. Hall (2020) criticizes such tokenistic practices, in which transparency is merely symbolic and serves to legitimize existing power structures rather than to empower stakeholders. To address fairness and procedural justice in tourism, participatory governance should prioritize mutual understanding and collective oversight of data-based systems. While these ideals remain aspirational, digital accountability provides a critical pathway to operationalizing fairness and legitimacy within sustainable tourism governance.

XAI as a Catalyst for Transparent Governance

Explainable AI (XAI) makes the choices of automated systems in digital tourism interpretable, so that these systems can be held to principles of fairness and responsibility (Xu, Wang and Kim 2025; Jin et al. 2023). XAI is a considerable step toward aligning automation with ethical governance in tourism. According to Xu, Wang and Kim (2025), XAI refers to computational techniques that make algorithmic processes comprehensible to non-technical end-users, which is crucial for recommendation systems and dynamic pricing. Although XAI is theorized to make decisions fairer by exposing decision rationales and allowing bias to be identified, these claims are contestable. Cheong (2024) argues that XAI tends to provide purely technical transparency and does not guarantee socio-political accountability. Explaining an algorithm does not in itself make it more inclusive or just, because system design is usually driven by corporate rather than community priorities (Jin et al. 2023). The real value of XAI in tourism management therefore lies in its integration into larger systems that address the cultural, ethical, and power dimensions of technology use.

Using XAI in decision-making supports auditing and helps reduce bias, thereby strengthening stakeholder confidence in AI-based platforms (OECD 2024b; Bingol and Yang 2025). A key prospect for improving transparency is the potential of XAI to facilitate systematic auditing of AI-based tourism platforms. OECD (2024b) emphasizes explainability as a key to responsible AI that helps reduce bias and build stakeholder trust, whereas Bingol and Yang (2025) identify it as a component of participatory oversight. However, empirical evidence of such practices is scarce because platforms tend not to disclose their algorithms for proprietary reasons (Jin et al. 2023). Even when explanations are provided, they are in most cases too technical, creating an interpretability gap that locks out non-experts (Xu, Wang and Kim 2025). As a result, although XAI could support accountability, its practical implementation remains constrained by institutional limitations and the profit-seeking imperatives of digital capitalism, which undermine sincere transparency.

XAI succeeds only when well-established data governance and clear accountability structures are in place to translate transparency into procedural fairness (Cheong 2024). Its success depends on being embedded in solid data governance systems. Cheong (2024) argues that explainability alone will not produce equity unless it is supported by accountability frameworks that operationalize transparency as procedural justice. In the absence of such mechanisms, XAI risks becoming transparency-washing, in which a pretense of openness hides structural inequalities. Similarly, Hall (2020) and Tuomi and Ascencao (2023) argue that technical transparency rarely changes the distribution of decision-making power in the tourism industry or addresses its systemic problems. To fulfil its role in sustainable governance, XAI should be integrated into participatory data models grounded in ethical stewardship and community control. However, these governance foundations remain fragile, with AI adoption outpacing regulation. The democratic potential of XAI therefore remains largely theoretical unless it is embedded in socio-institutional processes that address power relations.

Interactions and Outcomes

Transparency and trust reinforce one another, shaping tourists' perceptions of authenticity and their overall satisfaction (Dangi & Petrick, 2021; Singh, Lee and Tsai, 2025). The relationship between transparency and trust is one of the determinants of authenticity and satisfaction in tourism experiences. According to Dangi and Petrick (2021), clear communication improves trust and leads to genuine visitor engagement, whereas Singh, Lee and Tsai (2025) state that perceived transparency in AI-mediated interactions boosts trust in both the platform and the destination. Nevertheless, this relationship is not always linear. Credibility and track record may generate more trust than the amount of information disclosed (Mayer, Davis and Schoorman, 1995). Transparency that is too technical or poorly framed may even erode trust and come across as manipulative. Trust is also culturally and contextually determined, so tourists receive transparency signals differently (Buhalis and Sinarta, 2019). Transparency in itself is therefore not sufficient to produce trust; its effect depends on the quality of communication, cultural sensitivity, and the perceived fairness of the interaction, not on the mere presence of disclosure.

When transparency and trust are institutionalized, they support responsible tourist behavior aligned with sustainability goals and reduce the scope for opportunistic greenwashing (Li et al. 2025; Wasaya, Prentice & Hsiao 2024). Institutionalizing transparency and trust shapes sustainable tourist behavior through ethical decision-making and accountability. Li et al. (2025) argue that transparent reporting of environmental practices steers tourists toward decisions that align with sustainability goals, whereas Wasaya, Prentice and Hsiao (2024) observe that credible reporting reduces skepticism and limits greenwashing. Nevertheless, the connection between transparency, trust, and sustainable conduct is complicated. Transparency can increase environmental accountability, but it can also be deployed merely for marketing benefit, generating symbolic rather than practical sustainability (Font and McCabe, 2017). Transparency should therefore be translated into accountability through institutional structures such as third-party audits and participatory certification systems. In the absence of such mechanisms, transparency and trust can become a performance that markets sustainability discourse instead of developing genuine ecological ethics in tourism governance.

Integrating transparency, trust, and explainable artificial intelligence (XAI) within a single framework can enhance legitimacy, equity, and resilience in participatory tourism governance (Bingol & Yang 2025). When transparency, trust, and XAI are pursued together, an opportunity emerges for equitable and sustainable tourism governance. Bingol and Yang (2025) assert that when socio-technical transparency is combined with institutional mechanisms of trust, the procedural legitimacy and ethical resilience of participatory tourism are enhanced. However, this integration remains largely theoretical: recent research treats transparency, trust, and XAI as separate constructs rather than as mutually reinforcing ones. This fragmentation limits the ability of governance models to address systemic challenges, including algorithmic bias, unequal access to information, and the marginalization of communities. Moreover, implementing XAI sustainably requires continuous monitoring, coordination across its areas of application, and responsive governance, which most tourism systems cannot yet provide. The literature thus identifies the potential of the framework, yet notes that it remains aspirational without systems deliberately designed for fairness and inclusiveness.

Conclusion:

This review highlights that transparency, trust, and Explainable Artificial Intelligence (XAI) are critical components for achieving accountable and participatory tourism governance. While XAI can improve algorithmic transparency and stakeholder confidence, its effectiveness depends on strong institutional governance and inclusive data accountability frameworks. Integrating these elements within a socio-technical governance approach can enhance legitimacy, fairness, and sustainability in digital tourism systems.